In my last post (Understanding AI Terminology: A Practical Guide), we sorted out the AI vocabulary to cut through the vendor fog. But knowing the terms is the easy part. The real headache for engineering leadership right now isn't defining what an LLM is; it's the brutal reality of putting one into production to write idiomatic code, debug concurrent systems, and actually solve problems.

Right now, the holy grail for enterprise engineering is the autonomous software delivery pipeline: an architecture where an AI ingests incoming Jira issues or GitHub bugs, investigates the codebase, writes the necessary Go code, runs the test suite, and opens a passing Pull Request without human intervention. The vendor prototypes for this always look flawless. In reality, production rollouts usually end up crushed by runaway costs, massive latency, and agents accidentally breaking builds or hallucinating non-existent standard library packages.

If we want to get past the hype and build an autonomous engine that actually works, we have to stop treating AI like a science experiment and start treating it like a messy distributed systems problem. Here is how you actually wire these blocks together, and the architectural traps you'll inevitably step in along the way.

The Prerequisites: Training Time vs. Inference Time

Before we get into the weeds, let's clear up a massive misunderstanding I hear from the business side constantly: how these models actually learn your company's proprietary code. As architects, we have to draw a hard line between Training Time and Inference Time.

Training Time

Training Time is the initial, computationally heavy phase where the base model is built. It involves crunching public code across huge GPU clusters over months. You are not training a model on your company's private repositories every time a developer merges a new feature.

Inference Time

Inference Time is the live, operational phase. This is the AI agent actively reading a Jira ticket, ready to write Go code.

When you want an AI to write code using your internal packages, you don't train it; you hand it specific snippets and API docs right before asking it to generate the code during Inference. That data fills the model's Context Window, its working memory for a single request. And here is the core architectural constraint of this entire process: hosted models bill by the token, so you pay an API fee, and incur a latency penalty, for every single line of code you shove into that working memory.

Core Integration Patterns: Wiring the Resolution Engine

Retrieval-Augmented Generation (RAG): Finding the Right Context

This brings us to the first major hurdle: trying to cram the entire enterprise monorepo into the context window.

If a bug report says "Panic: nil pointer dereference in the auth handler", a naive architecture dumps every authentication file from the last five years into the prompt. The AI gets overwhelmed, misses the specific middleware layer updated yesterday, and you just paid to process 50,000 tokens of irrelevant boilerplate.

RAG solves this by ensuring you only send the AI exactly the .go files and Architecture Decision Records (ADRs) it needs via semantic search.

The Catch: Keeping your vector database synced with your main branch is notoriously difficult. If a senior engineer refactors a core interface, your vector database needs that update immediately. Without robust webhooks streaming commits into your embedding pipeline, your AI will confidently write code using a v1 struct that your platform team deleted three days ago.

Pro-tip: Don't block your CI pipeline to update embeddings. Toss GitHub push webhooks into a background worker pool (like asynq or machinery in Go) to re-embed files asynchronously.
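To make that pro-tip concrete, here is a minimal sketch of the pattern using only channels and goroutines rather than asynq or machinery. The PushEvent type, the Embedder interface, and the countingEmbedder stand-in are all hypothetical names invented for illustration; a real setup would parse the actual GitHub payload and call your own embedding pipeline.

```go
package main

import (
	"fmt"
	"sync"
)

// PushEvent is a hypothetical, trimmed-down view of a GitHub push webhook.
type PushEvent struct {
	Commit string
	Files  []string
}

// Embedder is a hypothetical seam over whatever embedding pipeline you run.
type Embedder interface {
	Reembed(commit, path string) error
}

// Enqueue never blocks the webhook handler; it reports false when the
// queue is full so the caller can log, retry, or shed the event.
func Enqueue(queue chan<- PushEvent, ev PushEvent) bool {
	select {
	case queue <- ev:
		return true
	default:
		return false
	}
}

// StartWorkers launches n goroutines that drain the queue and re-embed
// every changed file. Close the queue, then Wait on the returned group.
func StartWorkers(n int, queue <-chan PushEvent, e Embedder) *sync.WaitGroup {
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for ev := range queue {
				for _, f := range ev.Files {
					if err := e.Reembed(ev.Commit, f); err != nil {
						fmt.Printf("re-embed %s@%s failed: %v\n", f, ev.Commit, err)
					}
				}
			}
		}()
	}
	return &wg
}

// countingEmbedder is a stand-in that only counts calls.
type countingEmbedder struct {
	mu    sync.Mutex
	count int
}

func (c *countingEmbedder) Reembed(commit, path string) error {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.count++
	return nil
}

func main() {
	queue := make(chan PushEvent, 16)
	emb := &countingEmbedder{}
	wg := StartWorkers(4, queue, emb)

	Enqueue(queue, PushEvent{Commit: "abc123", Files: []string{"auth/handler.go", "auth/middleware.go"}})

	close(queue)
	wg.Wait()
	fmt.Println("files re-embedded:", emb.count)
}
```

An in-memory channel loses queued events on restart, which is the main reason to reach for a durable task queue once this leaves the prototype stage.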

Semantic Routing: The Intelligent Dispatcher

Not every engineering task requires the heavy computing power of a state-of-the-art flagship model. Using a massive, expensive LLM to write a basic http.HandlerFunc or generate JSON tags for a struct is just burning cloud credits.

Semantic routing puts a dispatcher system in front of your expensive models to triage incoming GitHub webhooks.

The Catch: Misclassification will bite you. You use a small, fast AI to read the Jira ticket and decide which model gets the job. If the router mistakenly flags a nasty goroutine leak as a quick comment update, it sends the job to a cheap model that isn't smart enough to fix it.

Pro-tip: Don't use an LLM for routing if an if statement will do the job. If the incoming Jira epic already has the docs-only label, route it directly to the cheaper model using standard logic and save the API call entirely.

Prompt Chaining: The Automated Assembly Line

When you need an AI to reliably perform a multi-step development task, you have to lock it down with Prompt Chaining. While LLMs are great at creative reasoning, CI/CD pipelines require strict compliance.

The Catch: Think about scaffolding a new Go microservice. You absolutely cannot let the AI freely guess how to structure the repository. You chain it:

- Step 1: extracts data requirements
- Step 2: queries the internal gRPC schema registry
- Step 3: generates the struct models
- Step 4: runs go mod init and generates standard project layouts

Pro-tip: Write your chains as standard Go interfaces so you can mock the LLM generation steps during unit testing. You want to test your pipeline's logic, not the AI's mood on a Tuesday.

Tool Use (Function Calling): Giving the Bot Hands

This is the exact moment your AI assistant goes from a read-only autocomplete toy to a write-access liability.

When an AI "runs a test suite" or "commits code", it isn't executing terminal commands natively. It's generating a highly specific JSON payload asking your backend to execute the command on its behalf (e.g., {"action": "execute_go_test", "package": "./internal/auth", "flags": "-v -race"}).

The Catch: To resolve an issue autonomously, you must give the LLM a directory of tools. Prepare to spend 40% of your development time writing robust middleware just to catch the AI's syntax errors and manage API timeouts.

Pro-tip: Never trust the JSON payload an LLM hands you. Define your tool schemas using strict Go structs, validate them with something like go-playground/validator, and treat the payload with the same suspicion you would treat input from a public web form.
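As a sketch of what "strict" can mean for the execute_go_test payload shown earlier: this version uses only the standard library (encoding/json with DisallowUnknownFields plus hand-written checks) in place of go-playground/validator, so the example stays self-contained. The ToolCall struct mirrors the example payload; the flag allow-list and the path rules are assumptions you would tune for your own repository.

```go
package main

import (
	"bytes"
	"encoding/json"
	"errors"
	"fmt"
	"strings"
)

// ToolCall mirrors the example payload; the field set is an assumption.
type ToolCall struct {
	Action  string `json:"action"`
	Package string `json:"package"`
	Flags   string `json:"flags"`
}

// allowedFlags is a hypothetical allow-list; anything else is rejected.
var allowedFlags = map[string]bool{"-v": true, "-race": true, "-short": true}

// ParseToolCall decodes and validates a model-generated payload.
func ParseToolCall(raw []byte) (ToolCall, error) {
	var tc ToolCall
	dec := json.NewDecoder(bytes.NewReader(raw))
	dec.DisallowUnknownFields() // reject fields the schema does not define
	if err := dec.Decode(&tc); err != nil {
		return ToolCall{}, fmt.Errorf("decode tool call: %w", err)
	}
	if tc.Action != "execute_go_test" {
		return ToolCall{}, fmt.Errorf("unknown action %q", tc.Action)
	}
	if !strings.HasPrefix(tc.Package, "./") || strings.ContainsAny(tc.Package, " \t\n;|&$`") {
		return ToolCall{}, errors.New("package must be a plain relative path")
	}
	for _, elem := range strings.Split(tc.Package, "/") {
		if elem == ".." {
			return ToolCall{}, errors.New("package must stay inside the repository")
		}
	}
	for _, f := range strings.Fields(tc.Flags) {
		if !allowedFlags[f] {
			return ToolCall{}, fmt.Errorf("flag %q is not allowed", f)
		}
	}
	return tc, nil
}

func main() {
	good := []byte(`{"action": "execute_go_test", "package": "./internal/auth", "flags": "-v -race"}`)
	fmt.Println(ParseToolCall(good))

	bad := []byte(`{"action": "execute_go_test", "package": "./../secrets", "flags": "-v"}`)
	_, err := ParseToolCall(bad)
	fmt.Println("rejected:", err)
}
```

Even after validation, pass the package and flags to the runner as separate arguments (for example via os/exec) instead of building a shell string.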

Autonomous Agents: The Debugging Loop

In an agentic architecture, you stop hardcoding the steps entirely. You give the AI a core goal ("Fix Bug #9902"), a set of repository tools, and a while loop.

The Catch: Agents are incredible for deep, ambiguous code problems, but they are also stubborn. If your test framework returns a stack trace format the agent doesn't understand, it might spend 45 minutes repeatedly rewriting the exact same goroutine, failing the exact same test, until it drains your cloud budget.

Pro-tip: Implement a hard circuit breaker. If an agent takes more than 5 minutes or 5 tool-calls to debug a failing test, gracefully cancel the context (context.WithTimeout) and escalate to a human immediately.

Model Context Protocol (MCP): The Universal Infrastructure Plug

To pull all this together, you have to connect your AI to GitHub, Jira, SonarQube, and AWS. Writing custom API wrappers for every single integration is a recipe for brittle, unmaintainable infrastructure. Enter the Model Context Protocol (MCP)—essentially the USB-C standard for AI systems.

The Catch: Building a dedicated MCP server that exposes a standardized create_pr tool requires a significant upfront engineering investment. However, it fundamentally decouples your AI logic from your DevOps toolchain.

Pro-tip: Run your custom MCP servers as sidecars in your Kubernetes pods right next to your AI agent services. It keeps network latency virtually non-existent and lets you use standard K8s network policies to lock them down.

Day 2 Operations: Keeping the Bot from Breaking Production

Escalation: The Human Safety Net

No AI should have a 100% autonomous commit mandate on day one. They hallucinate, misunderstand requirements, and generate tech debt. Escalation isn't a failure state; it's a mandatory architectural feature.

You must define strict confidence thresholds. If an autonomous agent fails its test-driven development loop three times, it must instantly assign the PR to a human Senior Engineer.

Pro-tip: Do not just dump a massive wall of raw JSON logs on the developer. Build a final prompt chain that generates a clean Markdown table summarizing the Attempted Fixes, the specific failing tests, and the hypothesized root cause. Developers skim; give them the context in 10 seconds.

Observability: Tracing the Black Box

Traditional Application Performance Monitoring (APM) tracks CPU spikes. AI observability requires you to track the bot's reasoning.

When an AI confidently resolves a failing test by doing the exact wrong thing, your engineers need to know why. Without trace logging, you won't know that the AI fixed a failing unit test by simply deleting the assert.NoError(t, err) statement.

Pro-tip: Inject a unique TraceID into the context at the very start of the webhook handler. Pass that context down through every single LLM call, retrieval, and tool execution so you can visualize the lifecycle in tools like LangSmith or Datadog.

Cost Controls: Implementing AI FinOps

A standard CI pipeline costs roughly the same every time it runs. An AI agent does not. Generating a single struct definition might consume 1,000 tokens, while a complex agentic refactoring loop might chew through 150,000 tokens in three minutes.

Pro-tip: Do not wait for the end-of-month invoice to find out an agent went rogue. Set up real-time cloud billing anomaly alerts that trigger PagerDuty if your daily LLM provider spend spikes unexpectedly.

The Danger Zone: Where Autonomous Development Fails

Let's be completely honest: giving an AI the ability to read private codebases and execute build commands introduces massive operational risks. You have to account for these specific failure modes:

- The "Lost in the Middle" Syndrome: If you feed an LLM a massive handler.go file with thousands of lines, it tends to remember the beginning and the end of the text, but completely ignores or hallucinates the code buried in the middle. The Fix: Keep the context window small. Rely on aggressive RAG to send the model only the exact, isolated functions it needs.
- The "Permission Bypass": A junior developer asks an internal AI assistant how a specific data pipeline works. Because the vector database lacks Role-Based Access Control (RBAC), the AI happily pulls code from a private repository containing proprietary trading algorithms. The Fix: Pass the developer's IAM token into the vector search query so the AI can only retrieve what the user is explicitly authorized to view.
- The "Shredder Effect": Cutting Go code blindly by character count to fit into a vector database is a disaster. If you slice a large struct definition in half, or separate a struct from its receiver methods, the AI loses all context. The Fix: Write logic in your ingestion pipeline to parse the Go Abstract Syntax Tree (using go/ast) so structs, interfaces, and methods are preserved as single, logical units in the database.
- The God-Mode MCP Server: Because MCP makes it easy to expose infrastructure, the temptation is to build one giant server that has admin access to both GitHub and your AWS production environment. The Fix: Treat MCP servers like microservices. Scope them aggressively with least-privilege access. The server that reads Jira tickets must not be the same server holding the keys to merge to main.

A Final Note on Architecture

I'll leave you with this: the biggest myth in enterprise software right now is that AI bots require fundamentally new engineering rules. They don't.

From an architecture perspective, an LLM is simply an external, third-party API that happens to be exceptionally slow, highly expensive, and prone to returning bad data. Therefore, you must wrap it in the exact same defensive engineering patterns you use for any other unreliable microservice. Put an API Gateway in front of it, cache the frequent queries, enforce hard timeouts on the agent loops, and build fallback routing to human engineers for when the model inevitably gets confused.

We need to stop treating AI like magic, and get back to engineering.

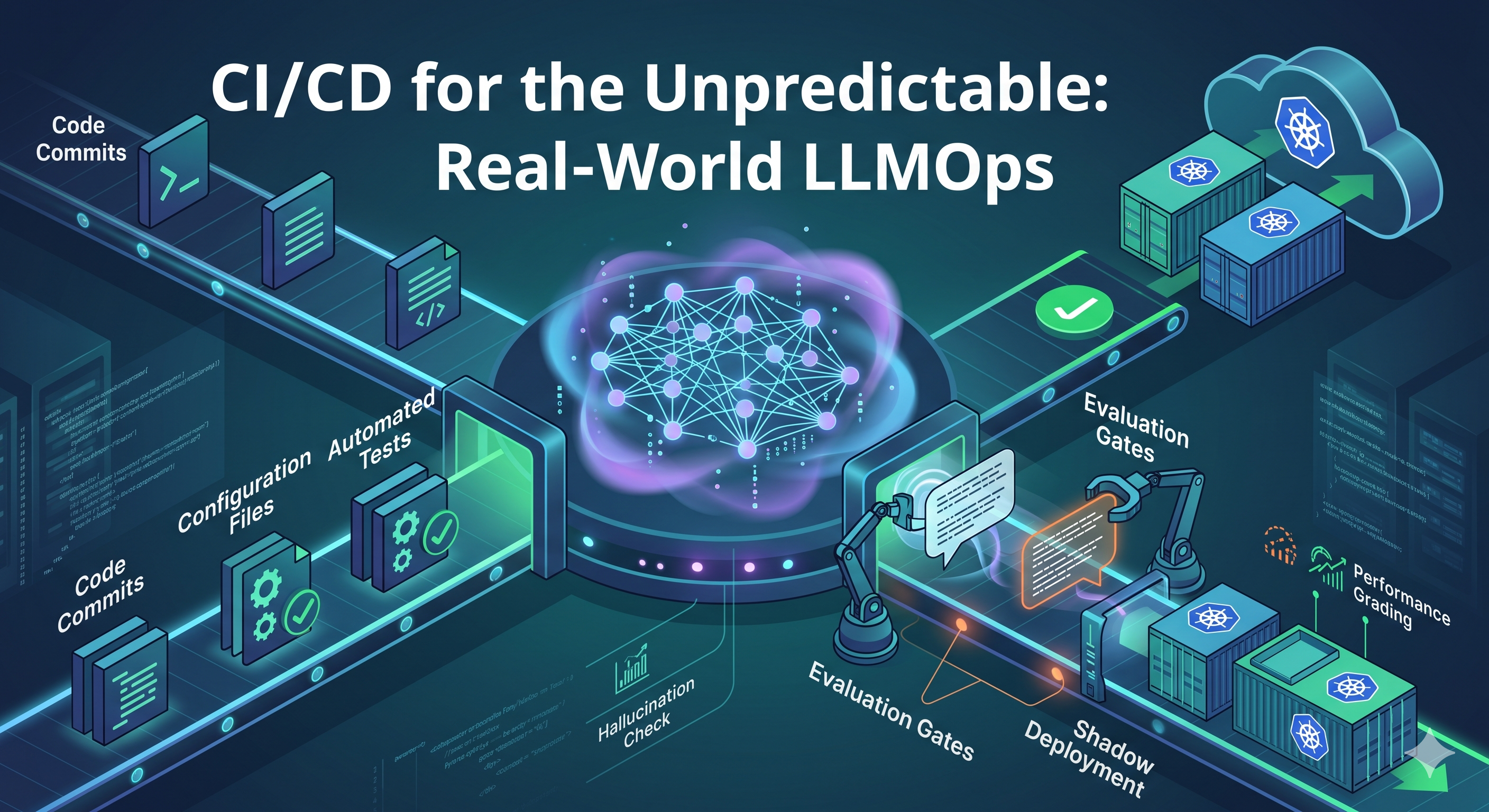
