In my last couple of posts, (Understanding AI

Terminology: A Practical Guide) and

(Architectural Patterns for AI Systems), we got past the buzzwords and looked at the architectural

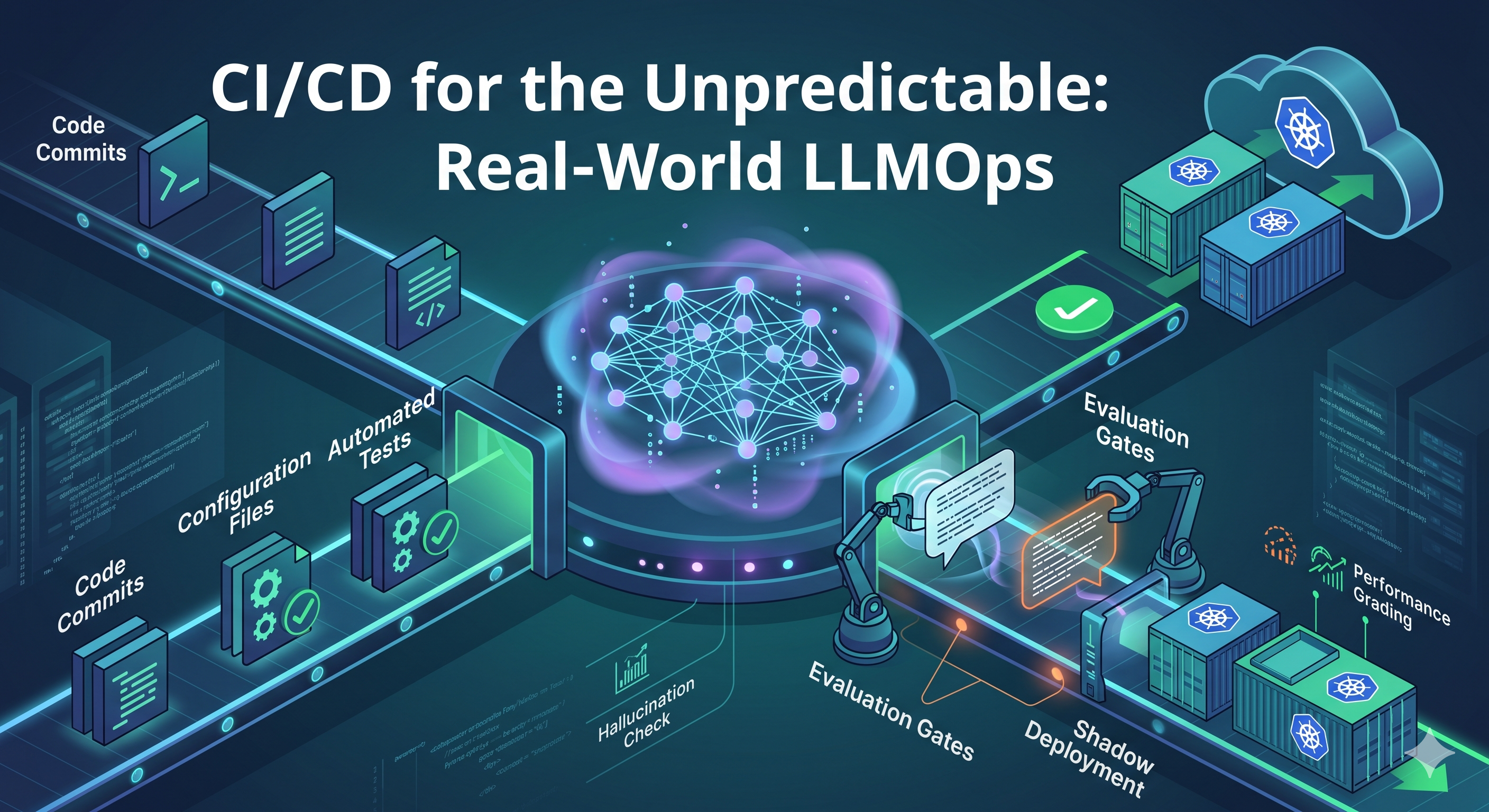

blueprints for bringing generative AI into your stack. Now let's talk about the messy part: actually

putting this stuff in production and keeping it alive.

For the bulk of our careers, we've built deployment pipelines to do exactly one thing: act painfully predictable. We lock versions, containerize our environments, and write rigorous unit tests because we expect that if we put State A in, we get Output B out. Every single time.

Then the business says, "Let's add AI!" and suddenly we're hooking our rock-solid, deterministic pipelines up to a probability engine that might literally change its mind on a Tuesday.

When your API relies on an LLM, the model can drift, hallucinate, and degrade over time, even if you haven't touched a single line of application code (for example, when the provider updates the model behind the same endpoint). If you treat a generative AI call like a standard REST API fetch, you're basically asking for a midnight outage.

To move these features out of the prototype phase and into resilient enterprise systems, we have to rethink how we deploy. Here are a few foundational patterns I've learned the hard way.

Stop Treating Prompts Like Config Strings

The most common anti-pattern I see in early AI adoption is teams treating prompts like feature flags or dynamic config strings, or just hardcoding them directly into a service class.

Prompts aren't just text; they are highly sensitive executable logic. Tweaking a single adjective can completely blow up the JSON output your downstream services rely on.

Consider an AI invoice parser whose downstream code strictly expected a JSON payload like {"total": 500.00}. At 4 PM on a Friday, someone decided the bot's internal persona needed to be "friendlier" and hot-fixed the system prompt in the database. Suddenly, our backend was trying to parse:

Here is your data! {"total": 500.00}. Have a great weekend!

Every downstream service threw a JSONDecodeError, PagerDuty screamed, and we spent three hours tracking down a single polite adjective.
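To make the failure mode concrete, here is a minimal Python reproduction, assuming the downstream service parses the model's reply with the standard library's `json.loads`. `parse_invoice` and `violates_contract` are hypothetical helpers for illustration, not code from the incident.

```python
import json


def parse_invoice(raw: str) -> float:
    """Strictly parse the model's reply; the contract is bare JSON like {"total": 500.00}."""
    payload = json.loads(raw)  # any surrounding prose makes this raise
    return float(payload["total"])


def violates_contract(raw: str) -> bool:
    """True if the reply can't be consumed by the downstream parser."""
    try:
        parse_invoice(raw)
        return False
    except (ValueError, KeyError, TypeError):  # json.JSONDecodeError subclasses ValueError
        return True


print(parse_invoice('{"total": 500.00}'))  # 500.0
print(violates_contract('Here is your data! {"total": 500.00}. Have a great weekend!'))  # True
```

A check this cheap is also easy to run against a prompt's sample outputs in CI, long before anyone hot-fixes a database row on a Friday.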

How to fix this:

- Put them in Git: System prompts belong in version control. Changing a prompt should require a Pull Request, a peer review, and a clear commit history.

- Bundle the Artifacts: A prompt is useless without its context. "Prompt v2" might work beautifully on a specific model version but fail miserably when the provider silently updates the model weights next month. Your deployment artifact needs to bundle the prompt, the pinned model version, and the hyperparameters as one immutable object.
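A minimal sketch of such a bundle, assuming Python: a frozen dataclass so the artifact can't be mutated after creation, plus a content hash that gives every combination of prompt, model version, and parameters its own ID. The class, its field names, and the model string are hypothetical, not any provider's API.

```python
import dataclasses
import hashlib
import json


@dataclasses.dataclass(frozen=True)
class PromptArtifact:
    """One deployable unit: prompt text, pinned model version, and hyperparameters together."""

    name: str
    system_prompt: str
    model_version: str  # pin an exact, dated snapshot rather than a floating alias
    temperature: float = 0.0
    max_tokens: int = 512

    def fingerprint(self) -> str:
        """Content hash over every field, so changing any one of them yields a new artifact ID."""
        canonical = json.dumps(dataclasses.asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


v2 = PromptArtifact(
    name="invoice-parser",
    system_prompt='Return only JSON of the form {"total": <number>}. No other text.',
    model_version="example-model-2024-06-01",  # hypothetical model identifier
)
print(v2.fingerprint())
```

Because the fingerprint covers the model version too, a silent model upgrade shows up as a different artifact rather than as "the same prompt" behaving differently.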

A quick tip on architecture: Decouple your prompt deployments from your core application deployments. If a prompt goes rogue, you need to be able to roll it back instantly. You don't want to wait for a 20-minute CI/CD build and Kubernetes pod restarts just to revert one misbehaving prompt. Use a lightweight prompt registry, or pull configurations from a versioned blob storage bucket at runtime.
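Here is a minimal in-memory sketch of that rollback path, assuming a registry keyed by prompt name with append-only versions and a movable "active" pointer. `PromptRegistry` and its methods are hypothetical; in practice the same shape could sit on top of a versioned blob storage bucket, with the app re-reading the active version on a short cache interval.

```python
from dataclasses import dataclass, field


@dataclass
class PromptRegistry:
    """Hypothetical in-memory stand-in for a prompt registry or versioned bucket."""

    _versions: dict[str, list[str]] = field(default_factory=dict)
    _active: dict[str, int] = field(default_factory=dict)

    def publish(self, name: str, prompt: str) -> int:
        """Append an immutable version, make it active, and return its version number."""
        versions = self._versions.setdefault(name, [])
        versions.append(prompt)
        self._active[name] = len(versions)  # versions are numbered from 1
        return self._active[name]

    def get(self, name: str) -> str:
        """What the application calls at request time (or on a short cache refresh)."""
        return self._versions[name][self._active[name] - 1]

    def rollback(self, name: str, to_version: int) -> None:
        """Move the active pointer back; no rebuild or pod restart involved."""
        if not 1 <= to_version <= len(self._versions.get(name, [])):
            raise ValueError(f"unknown version {to_version} for {name!r}")
        self._active[name] = to_version


registry = PromptRegistry()
registry.publish("invoice-parser", "Return only JSON.")
registry.publish("invoice-parser", "Be friendly! Then return JSON.")  # the Friday hot-fix
registry.rollback("invoice-parser", 1)  # instant revert
print(registry.get("invoice-parser"))  # Return only JSON.
```

Keeping old versions append-only is the design choice that makes rollback a pointer flip instead of a redeploy.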

Throwing Out the Unit Test

How do you write an assertEquals() when there are ten thousand valid ways for a model to answer a question? You can't. To automate testing for non-deterministic systems, we have to shift from binary assertions to grading rubrics.

Imagine you have a customer support bot, and a user asks, "How long do I have to return these shoes?" The human-verified golden answer in your test suite is:

Returns are allowed within 30 days.

You deploy a new prompt, and the bot now answers:

You have one month to send them back.

A traditional regex unit test checking for the string "30 days" will flag this as a catastrophic failure and halt your pipeline. But functionally? The bot gave a perfect answer.

Instead of regex, you need a curated baseline of historical user inputs paired with human-verified reference outputs (your golden dataset). When a dev tweaks a prompt, your CI pipeline runs the new prompt against that baseline and uses a secondary, isolated LLM to "grade" the primary model's work (LLM-as-a-Judge). You ask the judge:

Did this lose the original meaning? Is it strictly valid JSON? Is the tone correct?
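A minimal sketch of the grading loop, assuming the judge is any callable that takes a grading prompt and returns the judge model's raw reply; in a real pipeline it would wrap a call to a separate model, and no specific SDK is implied. `GoldenCase`, `grade`, and the rubric wording are illustrative. This rubric covers meaning and tone; strict JSON validity is cheaper to check in plain code than to ask a model about.

```python
from dataclasses import dataclass
from typing import Callable

# Assumed interfaces: both take text and return text. The judge would wrap a call to a
# separate, isolated LLM; the bot is the candidate prompt + model under test.
Judge = Callable[[str], str]
Bot = Callable[[str], str]

RUBRIC = (
    "You are grading a customer support bot.\n"
    "Question: {question}\n"
    "Reference answer: {golden}\n"
    "Candidate answer: {candidate}\n"
    "Does the candidate keep the meaning of the reference, in a polite, professional tone?\n"
    "Reply with exactly PASS or FAIL."
)


@dataclass(frozen=True)
class GoldenCase:
    question: str
    golden: str  # human-verified reference answer


def grade(cases: list[GoldenCase], bot: Bot, judge: Judge) -> float:
    """Run the candidate over the golden baseline and return the judge's pass rate."""
    if not cases:
        raise ValueError("empty golden dataset")
    passes = 0
    for case in cases:
        candidate = bot(case.question)
        verdict = judge(RUBRIC.format(question=case.question, golden=case.golden, candidate=candidate))
        if verdict.strip().upper().startswith("PASS"):
            passes += 1
    return passes / len(cases)


cases = [GoldenCase("How long do I have to return these shoes?", "Returns are allowed within 30 days.")]


def new_bot(question: str) -> str:  # stand-in for the new prompt's answer
    return "You have one month to send them back."


def stub_judge(prompt: str) -> str:  # stand-in for the secondary model's reply
    return "PASS"


print(grade(cases, new_bot, stub_judge))  # 1.0
```

In CI you would compare the pass rate against a threshold you pick from past runs, rather than demanding 100%.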

The pragmatic reality: running GPT-4 as a judge on every single commit will destroy your build times and your budget. Implement tiered evaluations. Use a smaller, faster model for your PR checks (for example: Did it output JSON? Is it toxic?), and reserve the expensive, full-scale semantic evaluation against your golden dataset for nightly builds or pre-production release candidates.
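A minimal sketch of the tiering logic, assuming hypothetical stage names (`"pr"`, `"nightly"`, `"release-candidate"`) and treating the semantic judge as any callable that returns `True` or `False`, for example a wrapper around an LLM-as-a-Judge call. The JSON check is plain code here because it doesn't need a model at all; a toxicity classifier would slot into the same fast tier.

```python
import json
from typing import Callable

Check = Callable[[str], bool]


def parses_as_json(output: str) -> bool:
    """Cheap PR-tier check: the whole reply must parse as JSON, with no surrounding prose."""
    try:
        json.loads(output)
        return True
    except ValueError:  # json.JSONDecodeError subclasses ValueError
        return False


def checks_for_stage(stage: str, semantic_judge: Check) -> list[Check]:
    """Fast checks on every PR; the expensive semantic judge only on nightly or release runs."""
    fast: list[Check] = [parses_as_json]  # a toxicity classifier would also go here
    if stage in {"nightly", "release-candidate"}:
        return fast + [semantic_judge]
    return fast


def run_tier(stage: str, outputs: list[str], semantic_judge: Check) -> bool:
    """Cheap checks run first; all() stops at the first failure, so the judge isn't called after it."""
    checks = checks_for_stage(stage, semantic_judge)
    return all(check(out) for out in outputs for check in checks)
```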

Shadow Deployments (Testing Without the Blast Radius)

Let's be honest: users will always find ways to break things you didn't test for. You simply can't predict every edge case. The final stage of testing has to happen in production, but we can't expose users to the blast radius of a bad deployment.

A team I know was migrating from an expensive LLM to a faster, cheaper model to save on API costs. It passed all internal QA. But instead of flipping the switch, they piped duplicate requests to an async queue and ran the new model in "shadow mode" for three days.

Looking through their Datadog logs, they realized the cheaper model completely misunderstood European date formats (DD/MM/YYYY). Real users were asking for expenses from 04/05/2023 (May 4th), and the shadow model was pulling data for April 5th instead. Because it was running in shadow mode, zero customers saw the wrong data.
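The ambiguity is easy to reproduce with Python's standard `datetime.strptime`: the same string yields two valid, different dates depending on which format is assumed, which is why neither reading looks "broken" on its own. This is an illustration, not the team's actual code.

```python
from datetime import datetime

raw = "04/05/2023"

# Day-first (DD/MM/YYYY): what the European users meant.
day_first = datetime.strptime(raw, "%d/%m/%Y").date()
# Month-first (MM/DD/YYYY): the reading the shadow model effectively applied.
month_first = datetime.strptime(raw, "%m/%d/%Y").date()

print(day_first.isoformat())    # 2023-05-04
print(month_first.isoformat())  # 2023-04-05
```

Normalizing extracted dates to ISO 8601 before diffing v1 and v2 output makes this kind of disagreement trivial to spot in shadow logs.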

When you deploy a heavily refactored prompt or migrate to a new model, use shadow routing:

- Keep routing real user traffic to your existing, stable setup (v1).

- Use an async event bus to duplicate incoming requests and send them to your new setup (v2) in the background.

- The user gets a reliable response from v1, while you log what v2 would have said and compare asynchronously.
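A minimal single-process sketch of that routing, assuming `asyncio.Queue` as an in-process stand-in for the event bus (in production this would be a real message broker) and async callables as stand-ins for the two model setups. Each request is enqueued together with v1's answer so the comparison has both sides; all function names are hypothetical.

```python
import asyncio
import logging
from typing import Awaitable, Callable

Model = Callable[[str], Awaitable[str]]
log = logging.getLogger("shadow")


async def handle_request(prompt: str, v1: Model, shadow_queue: asyncio.Queue) -> str:
    """Serve the user from v1, then hand a copy to the shadow worker without awaiting v2."""
    answer = await v1(prompt)
    shadow_queue.put_nowait((prompt, answer))  # unbounded queue: never blocks the user path
    return answer


async def shadow_worker(shadow_queue: asyncio.Queue, v2: Model, results: list) -> None:
    """Replay duplicated requests against v2 and record both answers for later comparison."""
    while True:
        prompt, v1_answer = await shadow_queue.get()
        try:
            v2_answer = await v2(prompt)
            # Exact match is a placeholder; in practice you would diff semantically.
            results.append({"prompt": prompt, "v1": v1_answer, "v2": v2_answer,
                            "match": v1_answer == v2_answer})
        except Exception:
            log.exception("shadow call failed")  # v2 errors are logged, never surfaced to users
        finally:
            shadow_queue.task_done()


async def demo() -> tuple[str, list]:
    async def v1(prompt: str) -> str:  # stand-in for the stable setup
        return "2023-05-04"

    async def v2(prompt: str) -> str:  # stand-in for the candidate setup
        return "2023-04-05"

    queue: asyncio.Queue = asyncio.Queue()
    results: list = []
    worker = asyncio.create_task(shadow_worker(queue, v2, results))
    answer = await handle_request("expenses from 04/05/2023", v1, queue)
    await queue.join()  # only for the demo; production code would not wait on shadows
    worker.cancel()
    return answer, results
```

The user-facing path only ever awaits v1; a slow or crashing v2 can delay the shadow worker but not the response.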

Shadow traffic generates massive amounts of data quickly. You don't need to shadow 100% of your production firehose to get statistical confidence. On a reasonably busy service, sampling just 5% or 10% of requests for 48 hours is usually enough to catch regressions. Just remember to put a strict TTL (Time to Live) on shadow logs so you don't blow up storage costs.
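One way to implement both pieces, sketched under assumptions: hash-based sampling on a request ID (so retries of the same request land on the same side of the sample) and an absolute expiry timestamp on every shadow record. The constants and helper names are illustrative. If your log store has native expiry, such as Redis key expiry or DynamoDB's Time to Live feature, prefer that over a hand-rolled sweeper like `purge_expired`.

```python
import hashlib
import time
from typing import Optional

SAMPLE_RATE = 0.05            # shadow roughly 5% of requests
SHADOW_LOG_TTL_S = 48 * 3600  # keep shadow records for 48 hours


def in_shadow_sample(request_id: str, rate: float = SAMPLE_RATE) -> bool:
    """Stable sampling: the same request ID always gets the same decision."""
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")  # roughly uniform in [0, 2**64)
    return value < rate * 2**64


def shadow_record(request_id: str, v1: str, v2: str, now: Optional[float] = None) -> dict:
    """Stamp an absolute expiry so a sweeper (or the store's native TTL) can drop it."""
    created = time.time() if now is None else now
    return {"request_id": request_id, "v1": v1, "v2": v2,
            "expires_at": created + SHADOW_LOG_TTL_S}


def purge_expired(records: list, now: Optional[float] = None) -> list:
    """Keep only records whose expiry is still in the future."""
    current = time.time() if now is None else now
    return [r for r in records if r["expires_at"] > current]
```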

Final Thought

Plugging an LLM into your stack trades deterministic reliability for incredible flexibility. You can't stop a large language model from occasionally acting weird. What you can do is build the scaffolding that catches the weirdness before it reaches the user, isolates the damage, and gives you the telemetry to iterate safely.

If you don't have an automated evaluation pipeline, you don't really have a production-ready AI feature; you just have a very expensive liability waiting to trigger an incident.

Stop treating prompts like config strings. Start building resilient LLMOps.
